The simple program below shows how to combine CSV files in C# without duplicating the header row. The technique assumes that all of the files have the same structure and that each of them contains a header row.

using System.IO;

using System.Linq;

namespace CombineCsvFiles

{

class Program

{

static void Main(string[] args)

{

var sourceFolder = @"C:\csv_files";

var destinationFile = @"C:\csv_files\csv_files_combined.csv";

// Wildcard search returns files with 1-2 digit suffix

string[] filePaths = Directory.GetFiles(sourceFolder, "csv_file_??.csv");

StreamWriter fileDest = new StreamWriter(destinationFile, true);

int i;

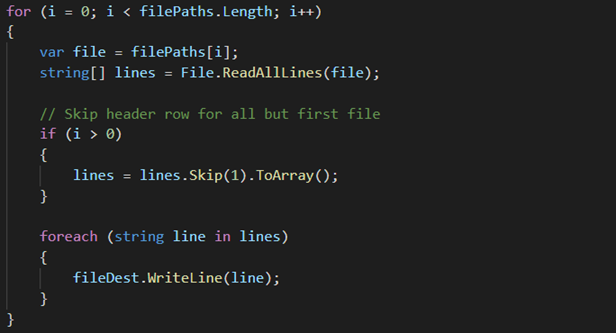

for (i = 0; i < filePaths.Length; i++)

{

var file = filePaths[i];

string[] lines = File.ReadAllLines(file);

// Skip header row for all but first file

if (i > 0)

{

lines = lines.Skip(1).ToArray();

}

foreach (var line in lines)

{

fileDest.WriteLine(line);

}

}

fileDest.Close();

}

}

}
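
The program above loads each file fully into memory and trusts that every header is identical. For larger files, or when that assumption is worth checking, a streaming variant may be useful. The following is only a minimal sketch under stated assumptions, not part of the original program: the `CsvCombiner` class, its `Combine` method, and the parameter names are hypothetical, and it assumes the first line of every file is its header. It sorts the paths so the row order is predictable, reads lines lazily with `File.ReadLines`, and throws if a later file's header differs from the first one.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Linq;

// Hypothetical helper for illustration; the names here are not from the original program.
public static class CsvCombiner
{
    // Writes the first file's header once, followed by the data rows of every file.
    // Returns the number of data rows written.
    public static int Combine(IEnumerable<string> sourceFiles, string destinationFile)
    {
        // Sort by path so the output order does not depend on the file system.
        // This is plain string order, so csv_file_10.csv sorts before csv_file_2.csv.
        var ordered = sourceFiles.OrderBy(p => p, StringComparer.OrdinalIgnoreCase).ToList();

        string header = null;
        int rows = 0;

        // append: false overwrites any existing destination file.
        using (var writer = new StreamWriter(destinationFile, false))
        {
            foreach (var path in ordered)
            {
                bool isHeaderLine = true;

                // File.ReadLines yields one line at a time instead of loading the whole file.
                foreach (var line in File.ReadLines(path))
                {
                    if (isHeaderLine)
                    {
                        isHeaderLine = false;
                        if (header == null)
                        {
                            // First file: remember the header and write it once.
                            header = line;
                            writer.WriteLine(line);
                        }
                        else if (line != header)
                        {
                            // Assumption check: every file must share the same header.
                            throw new InvalidDataException("Header mismatch in " + path);
                        }
                        continue;
                    }

                    writer.WriteLine(line);
                    rows++;
                }
            }
        }

        return rows;
    }
}
```

With the variables from the program above, it could be called as `CsvCombiner.Combine(Directory.GetFiles(sourceFolder, "csv_file_??.csv"), destinationFile)`. Like the original, this sketch treats every line as one record, so it would not handle quoted fields that contain line breaks.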